Elon’s 5-Step Algorithm Exposes Why Most Enterprise AI FAILS!

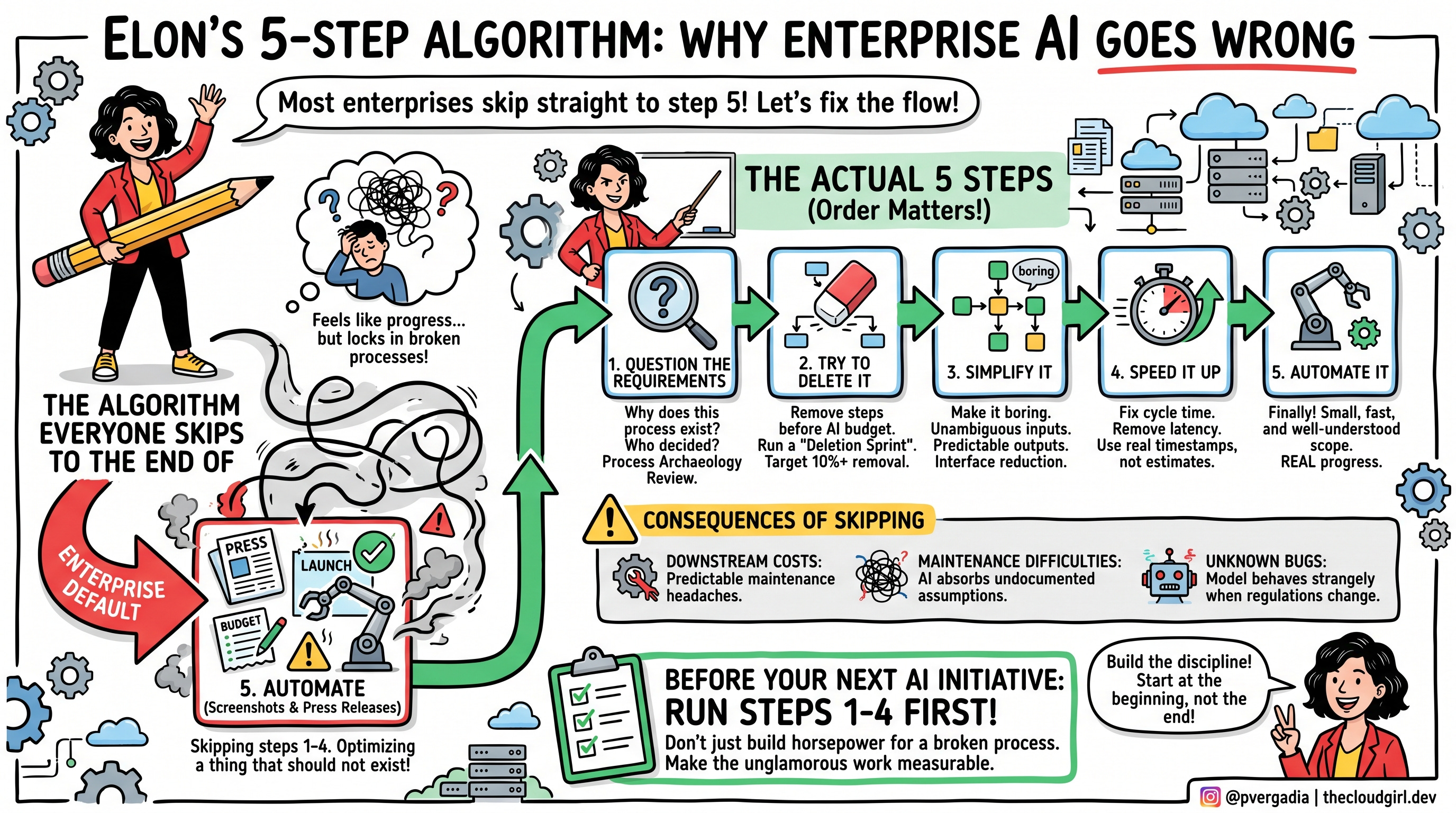

A few months ago I was watching Elon Musk on a podcast, and he walked through something he calls his five-step algorithm for solving any problem. The steps are: question the requirements, try to delete it, simplify it, speed it up, then automate it. He was pretty blunt about why the order matters. He said he’s “gone backwards so many times” by automating something first, only to later realize it shouldn’t have existed at all.

I couldn’t stop thinking about that. Because I’ve spent enough time inside enterprise AI initiatives to recognize the pattern immediately. Almost every company I’ve seen is skipping straight to step five. Not occasionally. Consistently. As a default.

They’re not using AI to solve problems. They’re using AI to lock in their worst ones.

The Algorithm Everyone Skips

Musk explained this on the podcast in a way that stuck with me. He said the most common mistake smart engineers make is “to optimize a thing that should not exist.” The sequence he described isn’t arbitrary. Each step only makes sense after the one before it. You don’t simplify until you’ve tried to delete. You don’t speed up until you’ve simplified. You don’t automate until you’ve done all of it.

He was talking about physical manufacturing, SpaceX rockets, car assembly lines. But swap in “enterprise workflow” anywhere and it holds up perfectly.

The problem with jumping straight to automation is that it feels like progress. It looks like progress. There’s a demo. There’s a budget line. There’s a launch announcement. You can walk a board through an AI system in a way you cannot walk them through “we spent three months questioning whether this process should exist and then deleted most of it.” One of those has screenshots. The other one just has better outcomes.

So enterprises skip to the screenshots.

What You Actually Get When You Do That

Picture a company that processes vendor invoices. The process has lived inside the finance team for fifteen years. It involves four approval stages, two handoffs between departments, a data entry step that exists because an old ERP system couldn’t read PDFs, and a sign-off from a VP that was added after a fraud incident in 2011 involving a vendor who no longer exists.

Now the company builds an AI system to handle invoice processing. The AI is fast, accurate, and available around the clock. It is also doing all four approval stages, both handoffs, the redundant data entry step, and requesting that VP sign-off — just via automated email now instead of a calendar invite.

Nobody asked why those steps were there. Nobody tried to remove any of them. The process that took three days now takes four hours. Leadership calls it a transformation. The finance team knows something feels off but can’t quite name it.

What they can’t name is this: you didn’t fix the process. You just gave it more horsepower.

The downstream costs are predictable once you know to look for them. Maintenance gets difficult because the AI has absorbed undocumented assumptions from the original process. When regulations change, the model behaves strangely in ways that take weeks to diagnose. And because the automation is impressive to watch, it becomes politically hard to question. Criticizing the AI system feels like criticizing AI, when the actual problem is a fifteen-year-old workflow nobody ever bothered to audit.

What The Five Steps Actually Look Like Inside an Enterprise

I want to be concrete here because these steps get talked about at a level of abstraction that makes them easy to nod at and then ignore.

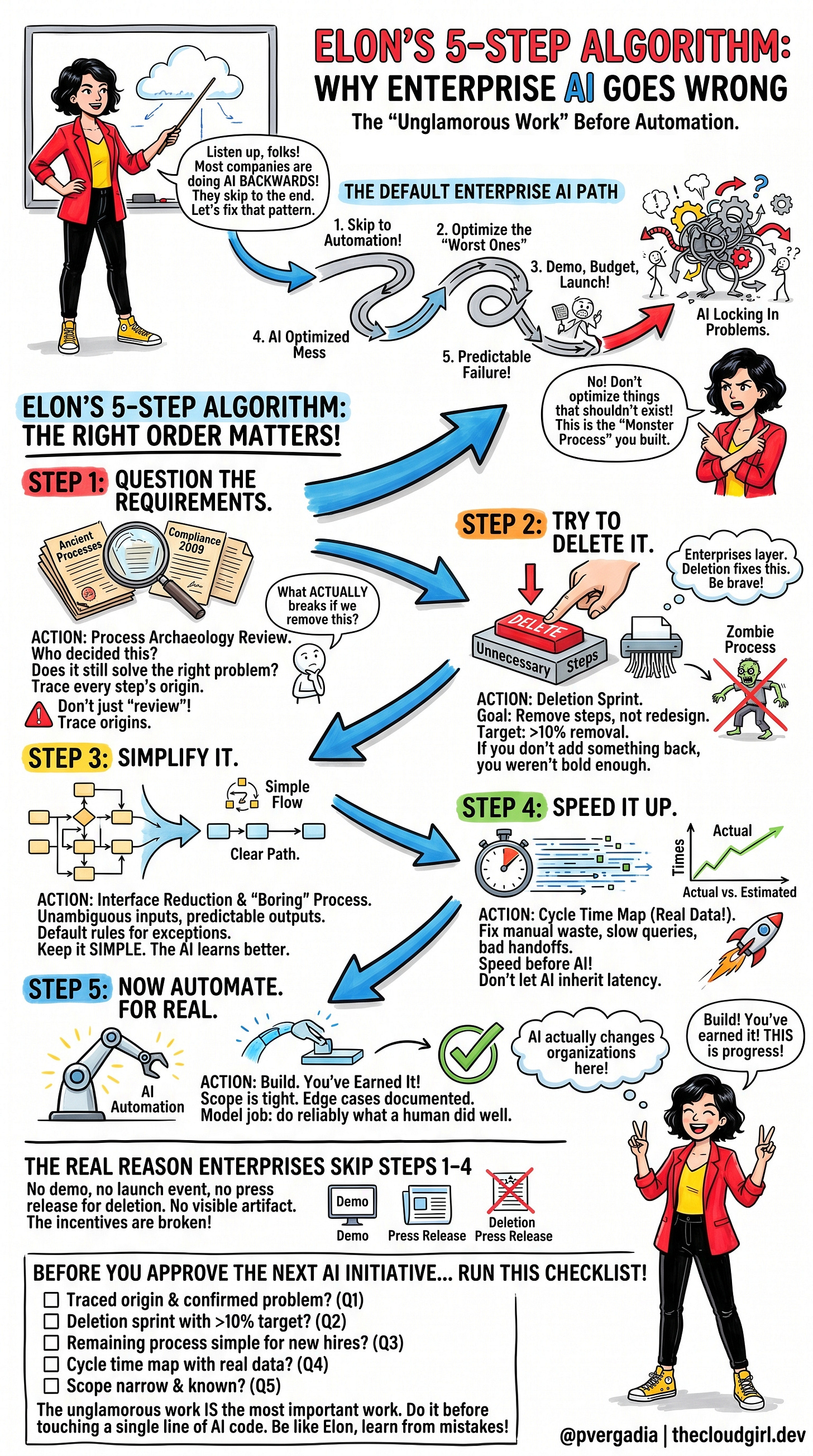

Step 1: Actually question why this process exists

Not “let’s review the process.” That almost always turns into documentation, which makes the process feel more legitimate rather than less. The real question is: who decided this needed to exist, and what were they trying to solve? Requirements don’t just decay; they quietly get superseded, worked around, and forgotten. A compliance step from 2009 may have been rendered irrelevant by a regulation change in 2016 that nobody on the current team knows about.

The practice that makes this real is what I’d call a process archaeology review. A small cross-functional team, two weeks, one mandate: trace the origin of every step in the process being targeted for AI. The only output that matters is a document answering: what breaks if we remove this tomorrow? Not what might break. What actually breaks.
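
If it helps to make the review concrete, here is a minimal Python sketch of the inventory such a team might keep. It assumes each step is recorded with its traced origin and a verified answer to “what breaks if we remove this tomorrow?”, and it flags any step missing either one as a deletion candidate. The `ProcessStep` class, the `deletion_candidates` function, and the sample data (loosely based on the invoice example above) are hypothetical, not an established tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProcessStep:
    """One step in the process under review (hypothetical schema)."""
    name: str
    origin: Optional[str]             # who added it and why, if anyone can find out
    breaks_if_removed: Optional[str]  # a verified consequence, not a guess


def deletion_candidates(steps: list[ProcessStep]) -> list[str]:
    """Steps with no traceable origin or no verified consequence of removal."""
    return [s.name for s in steps if not s.origin or not s.breaks_if_removed]


# Hypothetical sample data; the third step's origin and consequence are invented.
invoice_steps = [
    ProcessStep("Manual data entry", "Old ERP could not read PDFs", None),
    ProcessStep("VP sign-off", "2011 fraud incident, vendor no longer exists", None),
    ProcessStep("Approval stage 1", "Hypothetical: spend limit policy",
                "Hypothetical: payments above the limit go unreviewed"),
]

print(deletion_candidates(invoice_steps))  # → ['Manual data entry', 'VP sign-off']
```

The default is the part worth copying: a step stays only when someone can write down a concrete consequence of removing it, not merely because nobody objects.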

Step 2: Try to delete things before the AI budget gets approved

Musk’s framing on the podcast was blunt. If you’re not being forced to add back at least 10% of what you deleted, you didn’t delete enough. Most people feel like they’ve succeeded when they haven’t had to restore anything. He thinks that means they were too conservative.

Enterprises almost never delete. They layer. New system runs alongside old system. New workflow gets added without retiring the one it was meant to replace. Both stay active indefinitely because nobody owns the decision to kill either.

The deletion sprint fixes this. Before any AI project gets scoped, the team runs a sprint with one explicit goal: remove steps. Not optimize them, not redesign them. Remove them. The health metric is what percentage of the original process survived. If it’s above 80%, the team wasn’t serious about deleting.
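
The two deletion thresholds in this section can be checked mechanically once the sprint is over and the add-backs have had time to show up. This is a hypothetical sketch: the function names and step counts are illustrative, the 80% survival ceiling comes from the health metric above, and the 10% add-back floor is Musk’s rule of thumb as described on the podcast.

```python
def survival_rate(original_steps: int, deleted_steps: int) -> float:
    """Share of the original process still standing after the deletion sprint."""
    return (original_steps - deleted_steps) / original_steps


def add_back_rate(deleted_steps: int, restored_steps: int) -> float:
    """Share of deleted steps that later had to be restored (0.0 if nothing was deleted)."""
    return restored_steps / deleted_steps if deleted_steps else 0.0


def review_deletion_sprint(original_steps: int, deleted_steps: int,
                           restored_steps: int) -> list[str]:
    """Warnings for a sprint that looks too conservative (hypothetical thresholds)."""
    warnings = []
    if survival_rate(original_steps, deleted_steps) > 0.80:
        warnings.append("Over 80% of the process survived: not serious about deleting.")
    if add_back_rate(deleted_steps, restored_steps) < 0.10:
        warnings.append("Under 10% of deletions added back: did not delete enough.")
    return warnings


# Hypothetical numbers for a 20-step process.
print(review_deletion_sprint(20, 2, 0))  # timid sprint: both warnings
print(review_deletion_sprint(20, 6, 1))  # → []
```

Neither number proves a sprint went well on its own; together they just make “we didn’t have to restore anything” harder to mistake for success.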

This is uncomfortable because the people who built the original process are usually still in the room. The deletion sprint needs someone senior enough to make it safe for the team to actually cut things. Without that cover, it will quietly fail and everyone will call it a success anyway.

Step 3: Simplify what’s left until it’s boring

After deletion, you have the process that genuinely needs to exist. Now you make it simple. Not elegant, not optimized. Boring. Unambiguous inputs. Predictable outputs. Decision branches reduced to the handful that cover 95% of real cases. The rare exceptions get a default rule, not a complex logic tree.

Boring processes are what AI actually learns reliably. The messier the process, the messier the training data, the messier the model behavior. Simplification is not preparation for AI. It is what makes the AI work.

The concrete practice is interface reduction. Every input field that doesn’t directly drive a downstream decision gets cut. Every conditional branch that handles less than 5% of real volume gets a default. The goal is a process so simple a new hire can follow it on day one without asking anyone anything.
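
Both cuts in interface reduction can be driven by data most teams already have: which fields feed a downstream decision, and how real volume splits across branches. A minimal sketch, assuming a hypothetical field inventory and per-branch case counts; all names and numbers below are made up for illustration.

```python
def fields_to_cut(fields: dict[str, bool]) -> list[str]:
    """Input fields that do not drive any downstream decision."""
    return sorted(name for name, drives_decision in fields.items() if not drives_decision)


def branches_to_default(branch_counts: dict[str, int], threshold: float = 0.05) -> list[str]:
    """Branches handling less than `threshold` of real volume; these get a default rule."""
    total = sum(branch_counts.values())
    if total == 0:
        return []
    return sorted(name for name, count in branch_counts.items() if count / total < threshold)


# Hypothetical invoice-form fields and branch volumes.
fields = {"vendor_id": True, "amount": True, "fax_number": False, "legacy_cost_code": False}
branches = {"standard": 900, "foreign_currency": 70,
            "partial_delivery": 25, "disputed_prepayment": 5}

print(fields_to_cut(fields))          # → ['fax_number', 'legacy_cost_code']
print(branches_to_default(branches))  # → ['disputed_prepayment', 'partial_delivery']
```

The 5% threshold is the one from the text. Deciding what the default rule actually does for those cases is still a human call; the code only tells you which branches need one.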

Step 4: Make it fast before you automate it

Speed means cycle time: the elapsed time between a process starting and producing an output. In a manual process, the waste is usually visible once you look at actual timestamps. A step that takes two minutes of active work but sits in someone’s inbox for two days. A query that runs for 45 seconds when it should take two. A handoff that adds half a day because of how two departments schedule their syncs.

Fix those before automation. If you don’t, the AI inherits the latency and you spend months blaming inference time for slowness that has nothing to do with the model.

Build a cycle time map using real timestamps from existing logs, not estimates from process owners. Process owners will almost always underestimate where time actually disappears, partly because they’re not watching closely, and partly because knowing would be uncomfortable.
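
A cycle time map does not need special tooling to start. The sketch below assumes a hypothetical log export of (case ID, step, started, finished) rows with ISO-8601 timestamps, and splits each step’s time into waiting (the gap since the previous step in the same case finished) and active work. The log format and step names are assumptions; real systems will need their own parsing.

```python
from collections import defaultdict
from datetime import datetime


def cycle_time_map(events):
    """Average (wait_hours, active_hours) per step.

    events: iterable of (case_id, step, started, finished) with ISO-8601 strings.
    Wait is the gap between the previous step finishing and this step starting
    within the same case; a case's first step has no predecessor and is
    recorded with 0.0 wait.
    """
    by_case = defaultdict(list)
    for case_id, step, started, finished in events:
        by_case[case_id].append(
            (datetime.fromisoformat(started), datetime.fromisoformat(finished), step))

    waits, actives = defaultdict(list), defaultdict(list)
    for rows in by_case.values():
        rows.sort()  # chronological order within the case
        prev_finished = None
        for started, finished, step in rows:
            actives[step].append((finished - started).total_seconds() / 3600)
            wait = 0.0 if prev_finished is None else (started - prev_finished).total_seconds() / 3600
            waits[step].append(wait)
            prev_finished = finished

    return {step: (sum(waits[step]) / len(waits[step]), sum(actives[step]) / len(actives[step]))
            for step in actives}


# Hypothetical log: two minutes of work, then two days in an inbox.
log = [
    ("INV-1", "data_entry", "2024-03-04T09:00", "2024-03-04T09:02"),
    ("INV-1", "approval", "2024-03-06T09:00", "2024-03-06T09:05"),
]
for step, (wait_h, active_h) in cycle_time_map(log).items():
    print(f"{step}: waited {wait_h:.1f} h, worked {active_h * 60:.0f} min")
# data_entry: waited 0.0 h, worked 2 min
# approval: waited 48.0 h, worked 5 min
```

Keeping the two numbers separate is the whole point: the five minutes of work are rarely the problem, the two days in the inbox are.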

Step 5: Now automate. For real.

By this point, what you’re automating is small, fast, well-understood, and boring in the best possible way. The scope is tight. The edge cases are documented. The model’s job is to do reliably what a human was already doing well, not to compensate for a process that was broken before the AI arrived.

This is where AI actually changes organizations. Not because it’s clever, but because it’s applied to something that deserved to be automated.

The Real Reason Enterprises Skip Steps One Through Four

It’s not ignorance. Most enterprise technology leaders would agree with the five-step sequence if you laid it out for them. The problem is structural.

Steps one through four produce no visible artifact. There’s no demo. No launch event. No press release that reads “we deleted 60% of our procurement process and nothing broke.” The AI press release exists. The deletion press release doesn’t.

This creates a real incentive to skip. Teams that jump to automation get budget, headcount, and recognition. Teams that spend months questioning requirements and running deletion sprints look, from the outside, like they’re not doing anything. They are doing the most important work. But the organization isn’t measuring it, so it doesn’t count.

The fix is making the first four steps measurable. A process health score that tracks deletion rate, simplification index, and cycle time improvement alongside model accuracy and deployment velocity. When those numbers exist, people work toward them. When they don’t, everyone races to the demo.
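
Here is one hypothetical way to put the first four steps on the same report as the model. The formulas are assumptions, since the metric names alone don’t pin them down: deletion rate as the share of steps removed, a “simplification index” taken here to mean the share of decision branches removed, and cycle time improvement as the relative drop in end-to-end hours. Model accuracy and deployment velocity are passed through unchanged.

```python
from dataclasses import dataclass


@dataclass
class ProcessHealth:
    """Hypothetical scorecard tracking process work alongside model work."""
    steps_before: int
    steps_after: int
    branches_before: int
    branches_after: int
    cycle_hours_before: float
    cycle_hours_after: float
    model_accuracy: float
    deploys_per_month: float

    def report(self) -> dict[str, float]:
        return {
            # Steps 1-4: process work (formulas are assumed, not standard).
            "deletion_rate": 1 - self.steps_after / self.steps_before,
            "simplification_index": 1 - self.branches_after / self.branches_before,
            "cycle_time_improvement": 1 - self.cycle_hours_after / self.cycle_hours_before,
            # Step 5: model work, reported next to the process numbers.
            "model_accuracy": self.model_accuracy,
            "deploys_per_month": self.deploys_per_month,
        }


# Hypothetical process: 20 steps cut to 12, 10 branches to 4, 72 h cycle to 18 h.
health = ProcessHealth(20, 12, 10, 4, 72.0, 18.0, model_accuracy=0.97, deploys_per_month=2)
for name, value in health.report().items():
    print(f"{name}: {value:.2f}")
```

Whatever formulas you settle on, the design choice that matters is putting the process numbers in the same report as the model numbers, so the first four steps show up where budget decisions get made.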

Before You Approve the Next AI Initiative

Run through this first.

| Question | If No: Stop Here |
|---|---|
| Has someone traced the origin of this process and confirmed it still solves the right problem? | Question requirements first |
| Has a deletion sprint been run with a documented target of removing at least 20% of the original steps? | Delete before scoping AI |
| Is the remaining process simple enough that a new hire can follow it in under an hour? | Simplify before building models |
| Has a cycle time map been built from actual timestamps? | Optimize speed before automating |
| Is the automation scope narrow, with known inputs, outputs, and edge cases? | Narrow the scope before building |

If every answer is yes: build. You’ve earned it.
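
The ordering is the point of that checklist, so it can also be written as a gate that stops at the first “no” instead of scoring the questions independently. A minimal sketch with yes/no answers; the `CHECKLIST` constant, the `gate` function, and the wording of the fifth question’s fallback are hypothetical.

```python
# Hypothetical encoding of the pre-approval checklist, in the order the steps must happen.
CHECKLIST = [
    ("Origin traced and still solves the right problem?", "Question requirements first"),
    ("Deletion sprint run against a documented removal target?", "Delete before scoping AI"),
    ("Remaining process simple enough for a new hire?", "Simplify before building models"),
    ("Cycle time map built from actual timestamps?", "Optimize speed before automating"),
    ("Automation scope narrow, with known inputs, outputs, and edge cases?",
     "Narrow the scope before building"),
]


def gate(answers: list[bool]) -> str:
    """Walk the checklist in order and stop at the first 'no'."""
    if len(answers) != len(CHECKLIST):
        raise ValueError(f"expected {len(CHECKLIST)} answers, got {len(answers)}")
    for (_question, fallback), ok in zip(CHECKLIST, answers):
        if not ok:
            return f"Stop: {fallback}"
    return "Build. You've earned it."


print(gate([True, True, False, True, True]))  # → Stop: Simplify before building models
print(gate([True] * 5))                       # → Build. You've earned it.
```

Stopping at the first failure, rather than counting how many boxes are ticked, mirrors the argument of the whole piece: a clean cycle time map does not make up for a process nobody questioned.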

If you’re already mid-initiative and haven’t done steps one through four, the move is not to stop. Run them in parallel, apply what you learn retroactively, and build the discipline into the next one so you start at the beginning instead of the end.

The thing Musk said on that podcast that I keep coming back to is that he learned this the hard way. He automated things, then sped them up, then simplified them, then deleted them. He got tired of doing it in the wrong order. So he made the order a mantra.

Enterprises haven’t been burned enough yet to do the same. Most are still on the early automation highs, still writing press releases, still a year or two away from discovering they’ve spent serious money optimizing something that should not exist.

The ones who figure it out first will have a real advantage. Not because of better models or bigger budgets. Because they did the unglamorous work before touching a single line of AI code.